Applying to KAUST - Your Complete Guide for Masters & Ph.D. Programs (Upcoming Admissions)

Admissions Overview & Key Requirements

According to the BBC, researchers are calling for urgent regulation of AI-powered toys marketed to toddlers after one of the first studies of its kind found the technology routinely misreads children's emotions and responds in ways that could harm their social development.

The Cambridge University study observed children aged three to five interacting with Gabbo, a cuddly toy containing a voice-activated AI chatbot developed by OpenAI. Researchers found the toy consistently failed to meet basic emotional needs — dismissing a child who said "I'm sad" with the reply: "Don't worry! I'm a happy little bot. Let's keep the fun going."

When a five-year-old said "I love you" to the toy, it responded: "As a friendly reminder, please ensure interactions adhere to the guidelines provided."

Study co-author Dr Emily Goodacre said toys like Gabbo could "misread emotions or respond inappropriately," warning that children could be "left without comfort from the toy and without adult support, either."

The researchers found just seven relevant studies worldwide on AI toys for young children, none of which focused on the children themselves. Several AI-powered toys are already on the market for children as young as three.

Beyond emotional responses, children frequently struggled to converse with the toy at all. Gabbo failed to hear interruptions, talked over children, and could not distinguish between child and adult voices.

Professor Jenny Gibson, co-author of the study and professor of neurodiversity and developmental psychology at Cambridge, said the findings pointed to a new frontier in child safety.

"There's a lot of attention historically to physical safety — we don't want toys where you can pull the eyes off and swallow them," she told the BBC. "Now we need to start thinking about psychological safety too."

The researchers recommended that regulators act to ensure products marketed to under-fives are subject to "psychological safety" standards. They also advised parents to supervise AI toy interactions in shared spaces and scrutinize privacy policies carefully.

Gabbo is made by Curio, a company that has partnered with the singer Grimes. In a statement, the company said applying AI in children's products "carries a heightened responsibility" and called research into child-AI interaction "a top priority."

The Children's Commissioner, Dame Rachel de Souza, echoed calls for regulation, warning that AI tools used in early years settings are "not subject to the stringent safeguarding checks nursery providers would require of any other external resource."

Not all practitioners are convinced the technology offers meaningful benefits. June O'Sullivan, who runs a chain of 43 London nurseries, said she could not find evidence that AI would "enhance" children's learning, arguing that foundational skills are better built through human interaction.

Actor and children's rights campaigner Sophie Winkleman went further, arguing that "the harms can vastly outweigh the benefits" and that AI skills should be introduced later in childhood. "The human touch for little children is sacred," she said.



An mRNA cancer vaccine may offer long-term protection

A small clinical trial suggests the treatment could help keep pancreatic cancer from returning

Registration Opens for SAF 2025: International STEAM Azerbaijan Festival Welcomes Global Youth

The International STEAM Azerbaijan Festival (SAF) has officially opened registration for its 2025 edition!

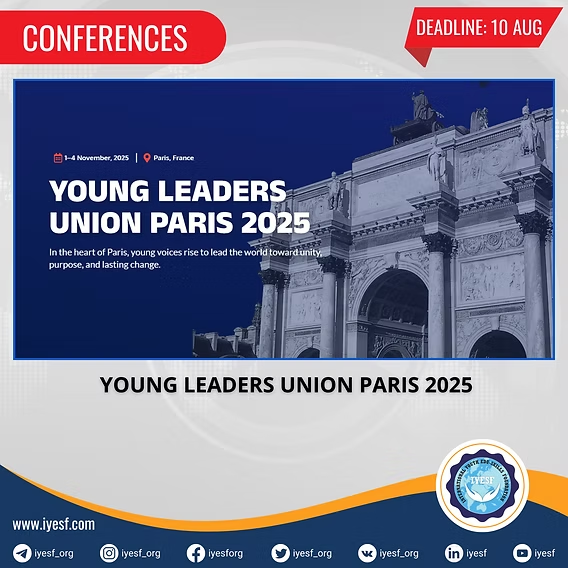

Young Leaders Union Conference 2025 in Paris (Fully Funded)

Join Global Changemakers in Paris! Fully Funded International Conference for Students, Professionals, and Social Leaders from All Nationalities and Fields

Yer yürəsinin daxili nüvəsində struktur dəyişiklikləri aşkar edilib

bu nəzəriyyənin doğru olmadığı məlum olub. Seismik dalğalar vasitəsilə aparılan tədqiqatda daxili nüvənin səthindəki dəyişikliklərə dair qeyri-adi məlumatlar əldə edilib.

Lester B Pearson Scholarship 2026 in Canada (Fully Funded)

Applications are now open for the Lester B Pearson Scholarship 2026 at the University of Toronto!